Getting Started with OpenAI

OpenAI has emerged as a leader in generative AI and is helping enterprises modernize their processes at a rapid pace. Get started with generative AI, OpenAI, and more!


Artificial intelligence (AI) is the talk of the technology industry, as its potential impact on consumers, enterprises, and governments is immense. OpenAI, a leading player in the AI space, has introduced a platform and API that open up new possibilities in the field, enabling developers to harness advanced models and integrate them into their applications. In this article, we will cover the basics of OpenAI, explore its range of use cases, and provide examples of how the API can be used to automate tasks, enhance user experiences, and transform content creation. Whether you are a developer eager to unlock the potential of AI or simply curious about the future of natural language processing, this post will give you a practical introduction to the OpenAI API.

We will begin by introducing the OpenAI Platform, including helpful links to get started. We’ll then discuss the components of the platform. Finally, we’ll explore the API through examples, first in the OpenAI Playground and then through direct API calls.

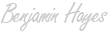

Navigating the OpenAI Platform

Documentation

OpenAI Documentation

Source: https://platform.openai.com/docs/introduction

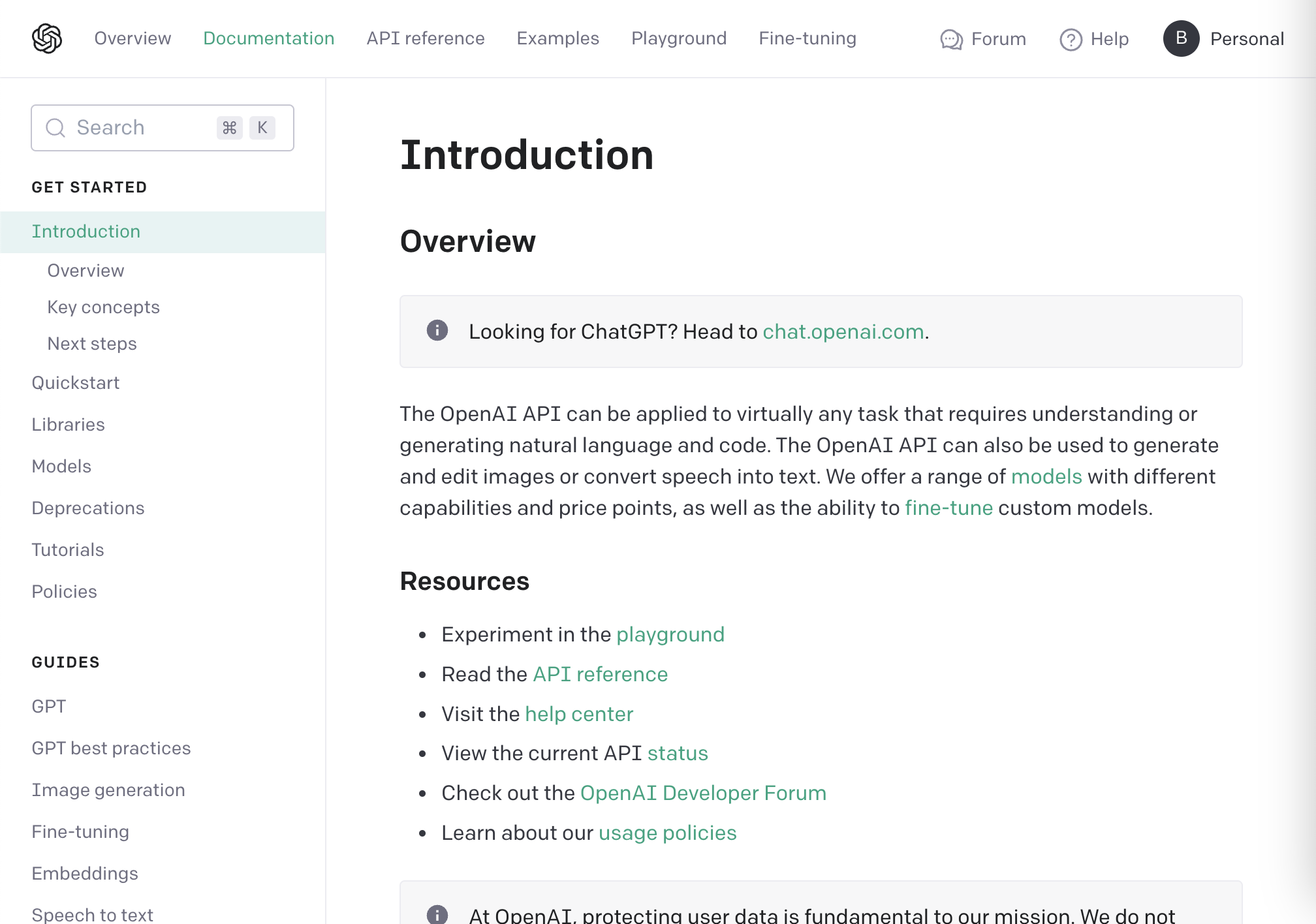

API Reference

OpenAI API Reference

Source: https://platform.openai.com/docs/api-reference

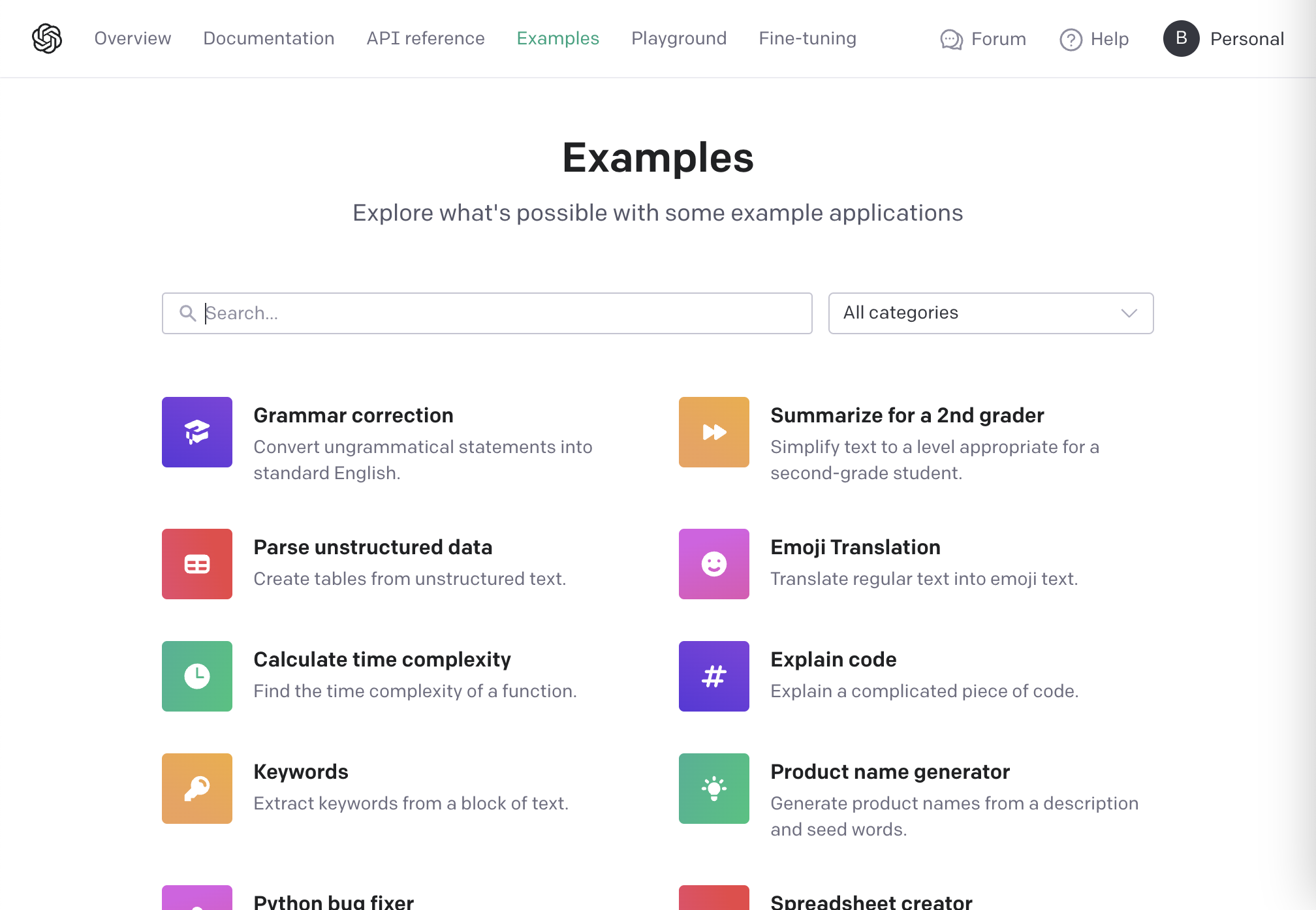

Examples

OpenAI Examples

Source: https://platform.openai.com/examples

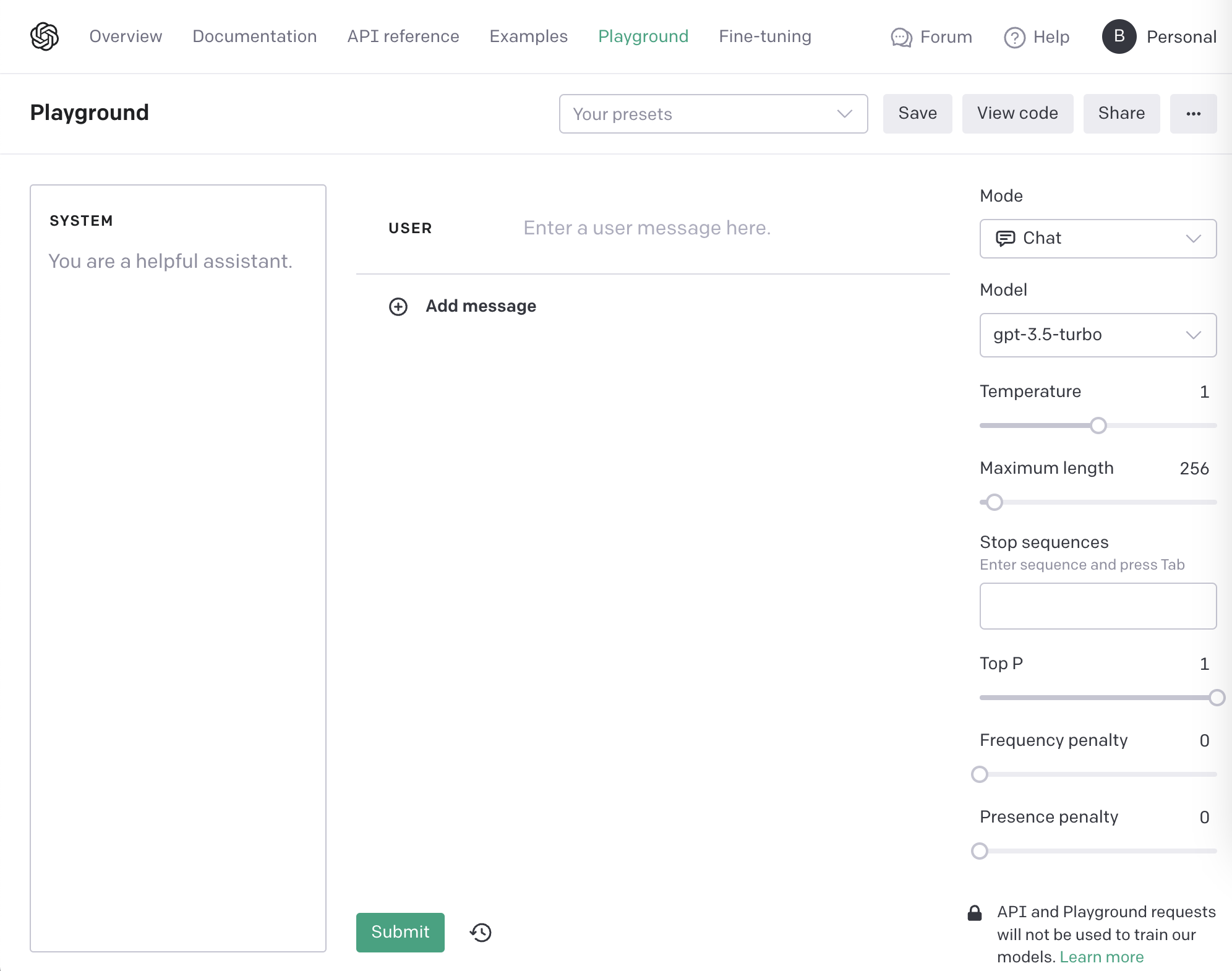

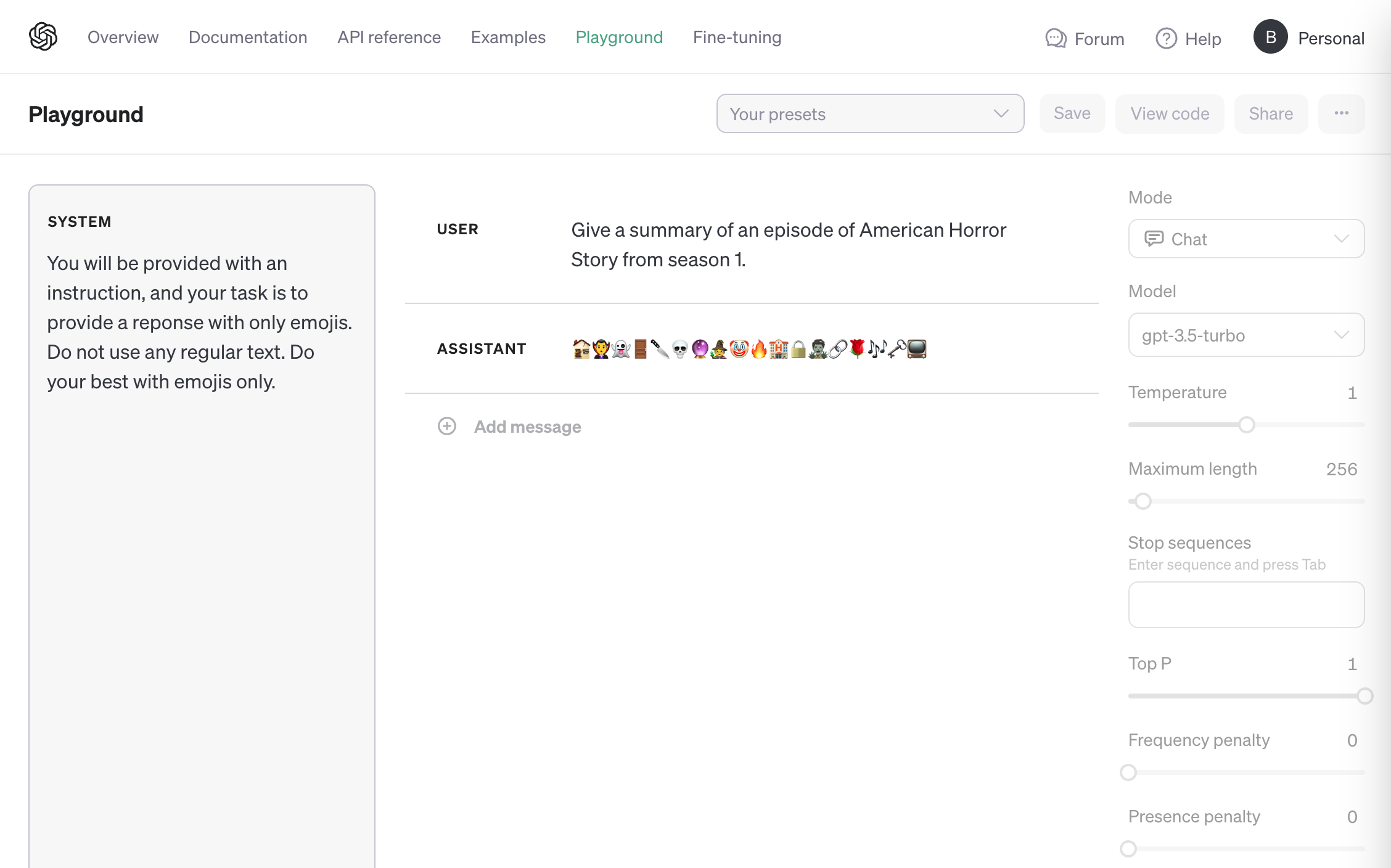

Playground

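- Temperature: Controls randomness. Values closer to 0 make the output more focused and deterministic; higher values make it more varied.

- Top P: Controls diversity via nucleus sampling. The model samples only from the smallest set of most likely tokens whose cumulative probability reaches the Top P value.

- Frequency Penalty: Penalizes tokens in proportion to how often they have already appeared, reducing verbatim repetition.

- Presence Penalty: Penalizes tokens that have appeared at all so far, which can increase the likelihood of the response moving on to new topics.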
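To make these settings more concrete, here is a toy sketch of how they can shape the choice of the next token. It is an illustration under simplifying assumptions, not OpenAI's server-side implementation: the "vocabulary" is a three-word dictionary of made-up logits, and the helper names (`adjust_logits`, `softmax_with_temperature`, `nucleus`, `sample_next_token`) are hypothetical. The penalty step subtracts the frequency penalty once per prior occurrence of a token and the presence penalty once if the token has appeared at all.

```python
import math
import random


def adjust_logits(logits, counts, frequency_penalty=0.0, presence_penalty=0.0):
    """Penalize tokens that already appear in the generated text.

    counts maps each token to how many times it has appeared so far.
    """
    adjusted = {}
    for token, logit in logits.items():
        c = counts.get(token, 0)
        adjusted[token] = (
            logit
            - c * frequency_penalty  # grows with every repetition
            - (presence_penalty if c > 0 else 0.0)  # flat, applied once a token has appeared
        )
    return adjusted


def softmax_with_temperature(logits, temperature):
    """Convert logits to probabilities; lower temperatures sharpen the distribution."""
    if temperature <= 0:
        raise ValueError("this sketch requires temperature > 0")
    scaled = {t: v / temperature for t, v in logits.items()}
    top = max(scaled.values())  # subtract the max for numerical stability
    exps = {t: math.exp(v - top) for t, v in scaled.items()}
    total = sum(exps.values())
    return {t: e / total for t, e in exps.items()}


def nucleus(probs, top_p):
    """Keep the most likely tokens until their cumulative probability reaches top_p."""
    kept, cumulative = {}, 0.0
    for token, p in sorted(probs.items(), key=lambda kv: kv[1], reverse=True):
        kept[token] = p
        cumulative += p
        if cumulative >= top_p:
            break
    total = sum(kept.values())
    return {t: p / total for t, p in kept.items()}  # renormalize the survivors


def sample_next_token(logits, counts, temperature=1.0, top_p=1.0,
                      frequency_penalty=0.0, presence_penalty=0.0, rng=random):
    """Apply penalties, temperature, and nucleus sampling, then draw one token."""
    adjusted = adjust_logits(logits, counts, frequency_penalty, presence_penalty)
    probs = nucleus(softmax_with_temperature(adjusted, temperature), top_p)
    tokens = list(probs)
    return rng.choices(tokens, weights=[probs[t] for t in tokens], k=1)[0]


# Made-up logits for three candidate next tokens
logits = {"cat": 2.0, "dog": 1.0, "fish": 0.1}
print(sample_next_token(logits, counts={}, temperature=0.8, top_p=0.9))
```

With `top_p=0.1`, only the single most likely token survives the nucleus step, so the draw becomes deterministic. If `"cat"` has already appeared three times and `frequency_penalty=1.0`, its logit drops from 2.0 to -1.0 and `"dog"` becomes the top candidate instead.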

OpenAI Playground

Source: https://platform.openai.com/playground

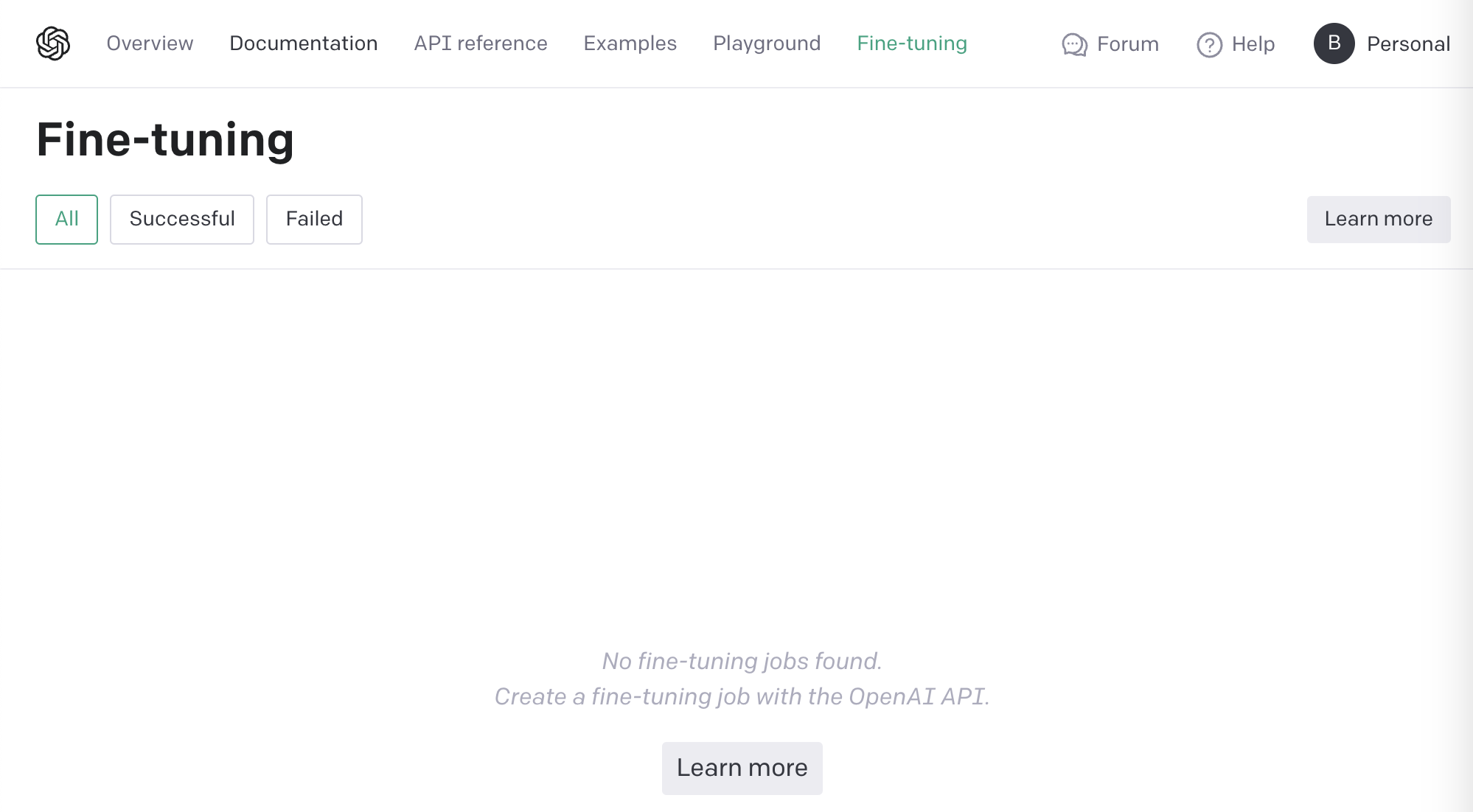

Fine-tuning

OpenAI Fine-tuning

Source: https://platform.openai.com/finetune

Let’s dive into the platform and see what the hype and potential are all about. Where do we start? We’ll look at the platform from four perspectives: authentication, models, endpoints, and finally examples. This approach covers the essential topics and shows the value that each API endpoint brings. We’ll begin with authentication, since we need it to access the platform and use the API.

Authentication

The OpenAI API requires authentication to help prevent misuse. Nothing out of the ordinary here: the API uses API keys for authentication, which gives you control over how your OpenAI account is used. For more information, refer to the OpenAI Docs - Authentication page.
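In practice, the API key is sent as a Bearer token in the `Authorization` header of every request (the curl examples later in this post do exactly this). Below is a minimal sketch of building those headers in Python, assuming the key is stored in the `OPENAI_API_KEY` environment variable; `auth_headers` is a hypothetical helper name.

```python
import os


def auth_headers(api_key=None):
    """Build HTTP headers for an OpenAI API request.

    Falls back to the OPENAI_API_KEY environment variable so the key
    never has to be hard-coded in source files.
    """
    key = api_key or os.environ.get("OPENAI_API_KEY")
    if not key:
        raise RuntimeError("Set OPENAI_API_KEY or pass api_key explicitly")
    return {
        "Authorization": f"Bearer {key}",  # Bearer-token authentication
        "Content-Type": "application/json",  # request bodies are JSON
    }
```

Reading the key from the environment keeps it out of version control, and the same headers can be reused for any endpoint.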

Models

Understanding the models is important, as they power the different capabilities of the OpenAI API. The models can be used as is or customized with fine-tuning. Some models are updated continuously, but if your use case requires a static model, OpenAI offers pinned model versions that remain available for at least three months after an updated version is introduced.

| Model | Description |
|---|---|
| GPT-4 / GPT-3.5 | Sets of models that improve on GPT-3 and can understand and generate both natural language and code. |
| GPT base | Set of models without instruction following that can understand and generate both natural language and code. |
| DALL-E | Model that generates or edits images given a natural language prompt. |
| Whisper | Model that converts audio into text. |
| Embeddings | Set of models that convert text into a numerical (vector) form. |
| Moderation | Fine-tuned model that detects whether text may be sensitive or unsafe. |
| Legacy/Deprecated | Older models that have been deprecated or superseded. |
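Since the available models change over time, it can help to query the list rather than hard-code it. The sketch below calls the Models endpoint (`GET /v1/models`) using only Python's standard library. It assumes the key is in the `OPENAI_API_KEY` environment variable and that the response follows the documented list format, a JSON object whose `data` array holds one object per model with an `id` field; `model_ids` and `list_models` are hypothetical helper names.

```python
import json
import os
import urllib.request

MODELS_URL = "https://api.openai.com/v1/models"


def model_ids(payload):
    """Extract sorted model IDs from a parsed /v1/models response body."""
    return sorted(item["id"] for item in payload.get("data", []))


def list_models(api_key=None):
    """GET /v1/models and return the IDs of the models your key can access."""
    key = api_key or os.environ["OPENAI_API_KEY"]
    request = urllib.request.Request(
        MODELS_URL, headers={"Authorization": f"Bearer {key}"}
    )
    # Sends a real network request; requires a valid API key
    with urllib.request.urlopen(request) as response:
        return model_ids(json.load(response))
```

Calling `list_models()` with a valid key returns IDs such as `gpt-4` and, typically, dated snapshots like the `gpt-3.5-turbo-0613` that appears in the response example later in this post.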

Endpoints

Let’s discuss the endpoints and how they support our applications. The OpenAI models are exposed to us, our users, and our applications through the OpenAI API endpoints, summarized in the table below. Note: all endpoint paths are relative to the base URL https://api.openai.com.

| Endpoint | Description | Path |
|---|---|---|
| Audio | Transcribe audio into text or translate audio into English | /v1/audio/transcriptions, /v1/audio/translations |
| Chat | Given a prompt or conversation, generate a response | /v1/chat/completions |
| Completions (Legacy) | Given a prompt, return one or more predicted completions. OpenAI recommends using the Chat Completions API (above) instead. | /v1/completions |
| Embeddings | Create an embedding vector representing the input text | /v1/embeddings |
| Fine-tuning | Create a job that fine-tunes a model on a specified dataset | /v1/fine_tuning/jobs |
| Files | Upload and manage files (e.g., training data) used with features like fine-tuning | /v1/files, /v1/files/{file_id} |
| Images | Generate an image from a text prompt, create a variation of an image, or edit an image given a prompt | /v1/images/generations, /v1/images/edits, /v1/images/variations |
| Models | List and retrieve the available models. Refer to the Models section above to learn more about each one. | /v1/models |
| Moderations | Classify whether input text violates OpenAI's content policy | /v1/moderations |
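Because every endpoint shares the same base URL and authentication scheme, a small helper can build requests for any of them. This is a minimal sketch using Python's standard `urllib`, assuming Bearer-token authentication as described in the Authentication section; the `ENDPOINTS` mapping covers only a few rows of the table, and `build_request` is a hypothetical helper name. It builds the request without sending it.

```python
import json
import urllib.request

BASE_URL = "https://api.openai.com"

# A few paths from the endpoints table (a subset, for illustration)
ENDPOINTS = {
    "chat": "/v1/chat/completions",
    "embeddings": "/v1/embeddings",
    "moderations": "/v1/moderations",
    "models": "/v1/models",
}


def build_request(endpoint, api_key, payload=None):
    """Build (but do not send) a request to one of the endpoints above.

    A JSON payload makes it a POST; no payload makes it a GET.
    """
    url = BASE_URL + ENDPOINTS[endpoint]
    headers = {"Authorization": f"Bearer {api_key}"}
    data = None
    if payload is not None:
        data = json.dumps(payload).encode("utf-8")
        headers["Content-Type"] = "application/json"
    return urllib.request.Request(url, data=data, headers=headers)
```

To actually send a request, pass the result to `urllib.request.urlopen`, for example `build_request("moderations", key, {"input": "Some text to check"})`.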

Examples

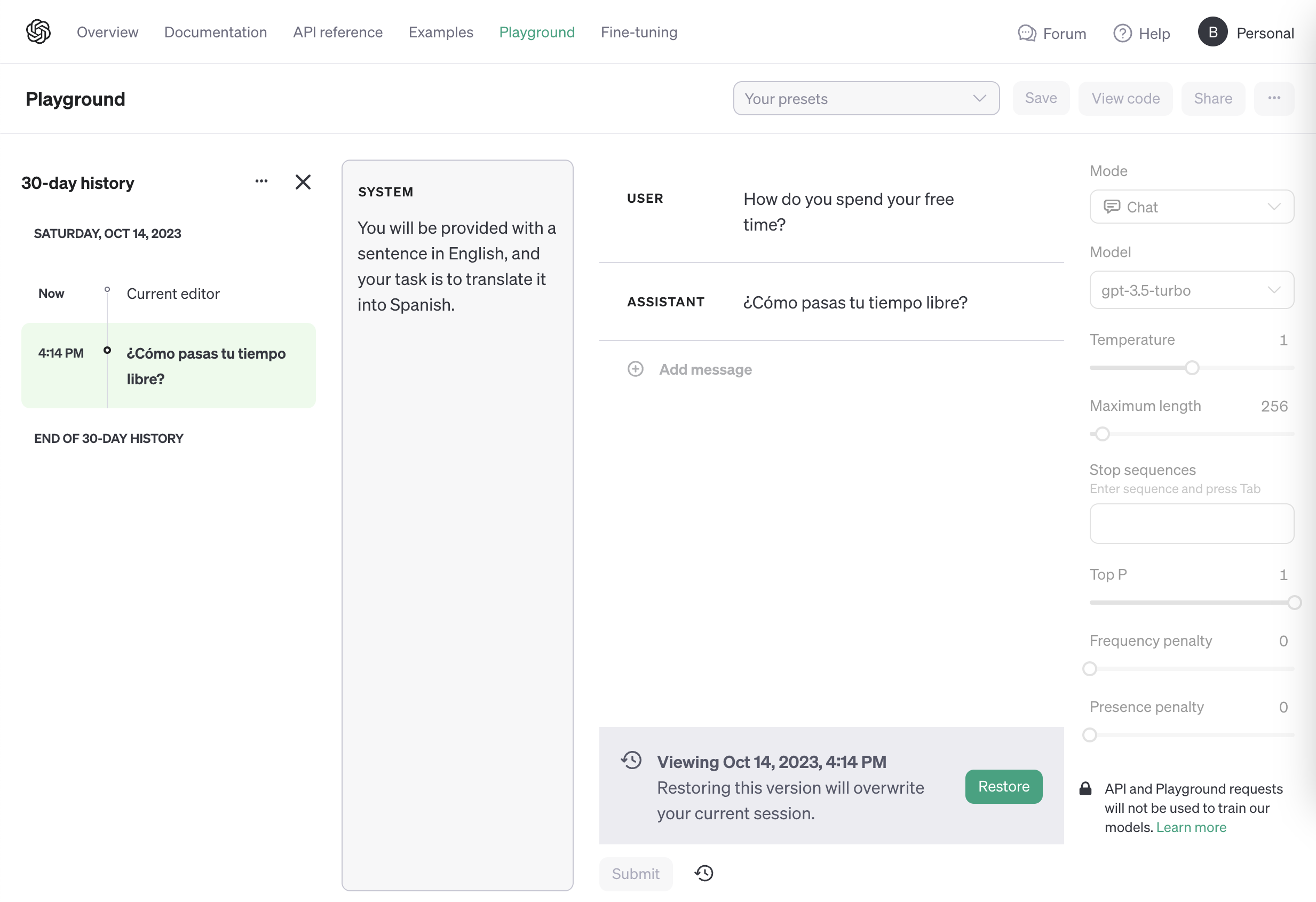

Let’s start with some examples using the OpenAI Playground to get familiar with the process and structure of working with OpenAI’s models.

In this first example, I ask the model to translate a simple English phrase to Spanish.

Example: Performing a translation using the Playground.

In the second example, I ask the model to describe the plot of a TV episode, but using only emojis.

Example: Prompting the GPT model to translate into emojis only. 😜
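In API terms, each Playground example boils down to a Chat Completions request body: a model name, a list of messages, and sampling settings such as temperature. The sketch below builds such bodies for both examples. The prompt wording, the system message, and the choice of `gpt-3.5-turbo` are assumptions for illustration, since the exact Playground prompts aren't shown here; `chat_payload` is a hypothetical helper name.

```python
import json


def chat_payload(prompt, system=None, model="gpt-3.5-turbo", temperature=0.7):
    """Wrap a user prompt (and optional system instruction) in a request body."""
    messages = []
    if system is not None:
        # A system message sets overall behavior for the conversation
        messages.append({"role": "system", "content": system})
    messages.append({"role": "user", "content": prompt})
    return {"model": model, "messages": messages, "temperature": temperature}


# Hypothetical prompts approximating the two Playground examples
translation = chat_payload("Translate into Spanish: Good morning, how are you?")
emoji_plot = chat_payload(
    "Describe the plot of a TV episode of your choice.",
    system="Respond using only emojis.",
)

print(json.dumps(emoji_plot, indent=2))
```

Putting the "emojis only" constraint in a system message rather than the user prompt is one design choice; including it in the user message would also work.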

Now that we understand how to interact with the models, we can leverage the OpenAI API to make calls to various endpoints. Here’s what that process looks like through a few examples:

If you are comfortable with Python, you can use the following snippet as a starting point for your application. It imports the openai module, which gives us easy access to the OpenAI API, and sends a Chat Completions request.

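```python
import os

import openai  # pre-1.0 interface of the openai package, e.g. pip install "openai<1.0"

# Read the API key from an environment variable rather than hard-coding it
openai.api_key = os.getenv("OPENAI_API_KEY")

response = openai.ChatCompletion.create(
    model="gpt-4",
    # At least one message is required; replace this placeholder with your own prompt
    messages=[{"role": "user", "content": "Hello!"}],
    temperature=0.8,
    max_tokens=256,
)

# Print the text of the model's reply
print(response.choices[0].message.content)
```

If you prefer a command-line approach, you can use curl to send requests to and receive responses from the OpenAI API. Take a look at the structure of a request: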

```shell
# Replace the placeholder message with your own prompt
curl https://api.openai.com/v1/chat/completions \
  -H "Content-Type: application/json" \
  -H "Authorization: Bearer $OPENAI_API_KEY" \
  -d '{
    "model": "gpt-4",
    "messages": [{"role": "user", "content": "Hello!"}],
    "temperature": 0.8,
    "max_tokens": 256
  }'
```

Let’s fire off an example using the curl approach. In the example below, we authenticate with the Bearer method by passing our OpenAI API key in the Authorization header. We set the model to gpt-3.5-turbo and call the /v1/chat/completions endpoint. The request and response are shown below. We instruct the model to “Say this is a test!” and the model returns “This is a test!”. It’s that simple!

Request

```shell
curl https://api.openai.com/v1/chat/completions \
  -H "Content-Type: application/json" \
  -H "Authorization: Bearer $OPENAI_API_KEY" \
  -d '{
    "model": "gpt-3.5-turbo",
    "messages": [{"role": "user", "content": "Say this is a test!"}],
    "temperature": 0.7
  }'
```

Response

```json
{
  "id": "chatcmpl-8AW4sHclyAoysqOmPpvMGJNT8iVxm",
  "object": "chat.completion",
  "created": 1697517278,
  "model": "gpt-3.5-turbo-0613",
  "choices": [
    {
      "index": 0,
      "message": {
        "role": "assistant",
        "content": "This is a test!"
      },
      "finish_reason": "stop"
    }
  ],
  "usage": {
    "prompt_tokens": 13,
    "completion_tokens": 5,
    "total_tokens": 18
  }
}
```

In conclusion, the OpenAI API has revolutionized the field of natural language processing and machine learning by enabling developers to integrate powerful models into their applications. The API provides a range of use cases, from drafting emails and generating code to creating conversational agents and assisting in content creation. The examples presented in this blog post highlight the versatility and potential of the API, showcasing how it can be utilized to automate complex tasks, improve user experiences, and increase productivity. As developers continue to explore and experiment with the capabilities of the OpenAI API, we can expect it to shape the future of AI-driven applications and pave the way for advancements in language understanding and generation.

Note: This conclusion paragraph was written by the OpenAI GPT-3.5-Turbo model with the following user prompt: Please write a 4-6 sentence conclusion paragraph summarizing a blog post that discusses the OpenAI API, use cases, and examples.

Additional Resources

- OpenAI Platform

- OpenAI - QuickStart Guide

- OpenAI - Examples

- YouTube: OpenAI DevDay Keynote (Available Nov 6th, 2023)